This is part three of a month-long series on VR. Check out parts one and two.

There’s nothing new about Virtual Reality. It’s not some obscure technology that has been hiding in dark basements all this time. I remember playing Heretic and Doom on VR systems in the ’90s. Now, thanks to many factors, VR tech has (almost) reached the point of being accessible to the common man.

Currently, a handful of companies are trying to push their own take on the VR dream. Some sit on the blurred edges of the industry, like Microsoft’s HoloLens, which is more Augmented Reality than Virtual Reality but rides the momentum of the Not-Real-Reality zeitgeist. Most of these efforts will die, and that’s OK. Everyone is pitching their own ideas, and in the end the most convenient and popular will survive, or maybe not (looking at you, VHS and Blu-ray).

Nintendo’s Virtual Boy was released in 1995, and was discontinued six months later.

How do we interact with VR?

Whoever wins the arms race, what we really hope to get out of it is a set of behavior standards. That is what concerns us as experience architects. Right now, all VR efforts have pretty much only one behavior in common: you can turn your head and perceive the virtual or augmented environment around you. Every other basic element of the experience, including how you interact with this environment (if you can at all), varies from system to system. We are not talking about some basic button to push here; we are talking about technology that aims to compete with reality itself.

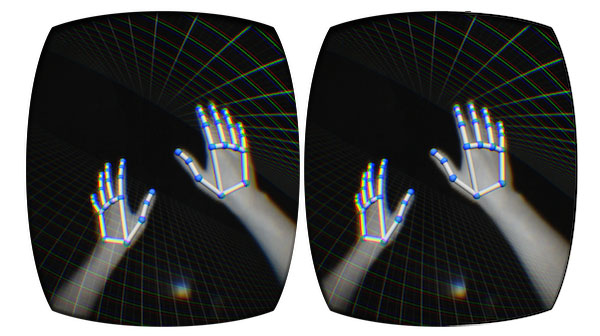

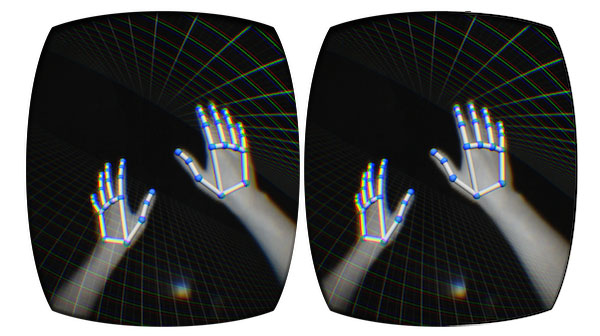

LeapMotion attaches to the Oculus Rift and tracks your hand movements.

VR needs an equivalent of the Xerox mouse or of Apple’s touch gestures on the first iPhone: a way to interact with the world VR is promising that is easy to use and quick to learn. One might think that, because VR aims to involve the entire self, body gestures are the obvious way to go. After all, finger gestures quickly became a standard, so this should be the next “step”. But time has proven that it’s not really cool to waste your energy waving your arms around to perform an action that could be done with a simple finger movement, like a tap or a mouse click.

Right now, many options are competing for the title of standard, or at least of best option: everything from full stations that let the user walk in place, to motion sensors, to simple hand controllers. All of them have been borrowed from the world of videogames, a world that has been dreaming of VR for decades.

Ever since the Nintendo Wii popularized the revolutionary “Wiimote”, decent full-body interaction with potential VR systems has seemed possible. But that’s for games, and you can only play boxing like that for so long before you get tired. Some experiences call for less physical immersion and more of a sensory experience.

Start Simple

If VR wants to reach a broad public and be a platform for a wide range of products, it needs to start simple before it gets complex. I bet somebody will want the complete “Lawnmower Man” battle station at home, but for now maybe companies need to aim low to hit high, and stop trying to replace reality itself.

That said, VR has the potential to be as immersive as anything anyone has ever experienced, so even simple interactions need to live up to what the device promises. We don’t want to break immersion by using the same Xbox controller we use for games on a flat screen. We want interactions that range from a jump to the movement of a finger, with hardware compact and precise enough that you don’t have to kick the kids out of their room to build a VR space where you can do all that.

Maybe Facebook, with Oculus, has a different vision of what the market wants than Valve and HTC do, but for now what we need is to see those ideas reach the public and watch how they (and we) react.

In these infant times of an old technology, we can’t expect epiphanies about how things are going to be forever. We expect evolution, and as designers it is our duty to give those ideas challenges and opportunities, to embrace chaos, and to not be afraid to fail. It’s time to aim for the moon. We will reach for the stars next… from the comfort of our sofas… using our VR headsets.

Let us know what you think about User Experience in VR by tweeting @zemoga!